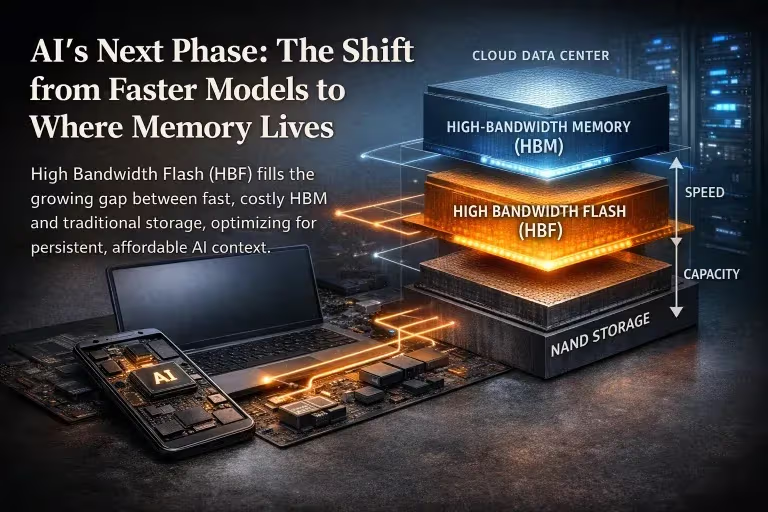

For much of 2025, Google presented Gemini as part of a broader vision of AI with fewer practical limits: larger context windows, stronger reasoning, and tools designed to move machine intelligence beyond the chatbot and into ordinary work. That promise still defines the official story. Google’s current documentation for Gemini 3.1 Pro advertises a 1,048,576-token context window and a maximum output of 65,536 tokens, figures meant to signal scale as much as capability.

The structure around that capability has become harder to ignore. Google now separates access across sharply different tiers, with Google AI Pro priced at $19.99 a month and Google AI Ultra at $249.99 a month, while support documents describe AI credits as a shared currency across products including Flow, Whisk, and Google Antigravity. In other words, the company is no longer selling Gemini simply as a model. It is selling a layered system of access, in which the most valuable forms of use are increasingly tied to premium plans, usage ceilings, and credit-based continuation.

That change is showing up in practice, not only in product pages. On Google’s own public developer forum, users have reported abrupt free-tier reductions, including a drop from 250 requests per day to 20, alongside recurring 429 errors that some said appeared even on paid configurations. Those reports do not amount to a formal company admission, and Google does not appear to have issued a matching public announcement. They do, however, capture a widening gap between headline capability and day-to-day usability as experienced by heavy users.

The pressure is easiest to see in the mechanics of ordinary work. A large context window matters less when long outputs become difficult to sustain cleanly. Agentic tools promise to remove manual effort, yet also enlarge the field in which usage can accumulate, especially once one instruction turns into scanning, sorting, revision, and retry across multiple steps. Google’s own support materials make clear that credits are now part of that wider ecosystem, not a marginal add-on. The result is a platform that still markets abundance while increasingly organizing serious use through tiers, thresholds, and metered extension.

Read narrowly, this is a story about one company refining the business model around a flagship AI system. Read more broadly, it reflects a familiar platform logic. AWS still describes cloud pricing in utility terms, comparing pay-as-you-go billing to water or electricity, and Alphabet has told investors to expect a sharp rise in infrastructure spending in 2026. Those two facts do not prove intent on their own. They do show the economic environment in which frontier AI is being sold: one shaped by large capital demands, finer-grained pricing, and strong incentives to segment access once dependence deepens.

The central question is no longer who can try advanced AI. It is who can rely on it continuously, at scale, and under budget once it becomes part of a newsroom workflow, a research pipeline, a product cycle, or a client-facing business. Gemini is one of the clearest places where that question is now being answered in public.

From Capability to Constraint

The practical divide does not begin on a pricing page. It begins in use.

Google’s public materials still present Gemini as a system built for scale. Vertex AI documentation for Gemini 3.1 Pro lists a 1,048,576-token maximum input and a 65,536-token maximum output. Google’s subscription pages pair that technical scale with a sharply tiered commercial structure, separating Pro from Ultra and reserving the “highest level of access” for the upper tier. On paper, the message is straightforward: large context, long output, advanced reasoning, and higher ceilings for users willing to pay for more.

Professional use imposes a stricter test. Large specifications matter only to the extent that they remain dependable once the model is folded into real work. A model can advertise a very large context window and still prove difficult to trust when a task has to hold its structure over a long draft, a technical brief, a multi-part analysis, or a report assembled under deadline. The relevant distinction is not between a strong model and a weak one. It is between headline capacity and dependable working range. Google’s own product architecture points in that direction: its subscription materials emphasize different levels of access, while Antigravity support documents describe a two-tiered usage system built around baseline quota and AI credits once those limits are exhausted.

That gap is easier to see in the workarounds heavy users describe. Jobs meant to be completed in one pass are split into smaller sections. Prompts are tightened or rewritten to keep the model on track. Outputs that should have finished cleanly are continued, patched, or restarted. The cost does not always appear as outright failure. More often it arrives as drag: another prompt, another pass, another round of supervision. On Google’s public developer forum, users have described sharp limit reductions and recurring 429 errors, including cases in which paid use still produced capacity-related interruptions. Those reports do not establish a single universal pattern, but they do show that practical usability can narrow well before the published ceiling is reached.

That drag carries financial weight. A partial answer leads to another query. A confused draft demands another round of repair. A task that looked simple at the start turns into multiple interactions, each one adding time and consumption. The system still appears available, often impressive in short bursts, and still capable enough to keep users inside the ecosystem. Difficulty shows up later, where work becomes more valuable and tolerance for interruption collapses. That is where the middle of the market becomes commercially important. The ordinary tier does not need to fail outright. It only needs to become unreliable often enough, and precisely enough, that serious users begin to feel the boundary between access and dependable use. Google’s own language already reflects that logic, distinguishing “higher access” from the “highest level of access” and tying continued use across parts of the AI stack to credits and usage systems.

Seen from that angle, the central question is no longer whether Gemini is powerful. The more revealing question is how much of that power remains stable once work becomes extended, repeated, and tied to production. That is the point at which capability stops being a headline and starts becoming a cost.

Agentic AI and the Expanding Cost of Use

Google’s own materials describe the new model family in terms that make the shift plain. Gemini 3.1 Pro is presented as better suited to “agentic workflows,” with improved token efficiency and more reliable multi-step execution, while Google’s support pages position Antigravity as a professional development environment for autonomous agents working across the editor, terminal, and browser. In product terms, the company is no longer selling a model that simply answers prompts. It is selling systems designed to plan, execute, verify, and continue across a larger share of the task.

That change matters because it alters the unit of consumption. A chat exchange is comparatively legible: a user asks, the model answers, the interaction ends or continues in visible turns. Agentic work is harder to read from the outside. A coding agent can scan files, trace dependencies, propose edits, test assumptions, revisit earlier steps, and continue iterating when the first pass fails. A research workflow can move through documents, cluster material, compare versions, surface ambiguities, and reopen the task as new questions emerge. What still feels like one request at the surface becomes a chain of operations underneath. Google’s own description of Antigravity as a system that lets users manage autonomous agents across multiple environments captures that broader scope of activity.

The pricing structure around those tools has moved in the same direction. Google One support documents say AI credits apply across Flow, Whisk, and Google Antigravity, and Google’s plan materials differentiate sharply between Pro and Ultra in both credits and access. Google AI Pro members receive monthly AI credits and can use them in Antigravity beyond baseline quota, while Ultra members receive far more credits and “the highest level of access” across Google’s AI offerings. That does not prove that every agentic action is being billed separately. It does show that Google’s broader AI ecosystem is no longer organized around a simple flat-fee intuition. Continued use now sits inside a layered structure of plans, baseline quota, credits, and higher-access tiers.

For users, the practical consequence is less about any single price point than about predictability. Once a team begins to rely on agents for drafting, revision, code maintenance, document analysis, or other multi-step work, spending becomes harder to estimate by instinct. A chat session that runs long is visible. A longer chain of agentic activity is not always as obvious while it is underway. Google’s own help materials frame credits as a way to “extend” usage beyond baseline quota in Antigravity, which underscores the basic design: professional-grade agentic work is expected to run into limits, and continuing past them is part of the product structure.

That makes agentic AI more than a feature upgrade. It widens the part of the workflow that can be measured, limited, and priced. The more fully a team folds these tools into ordinary production, the more the economic logic shifts from paying for answers to paying across the process that produces them. Google’s documentation does not hide that layered model; it formalizes it through quota, credits, and differentiated access. For serious users, the result is a system in which automation can reduce labor and expand budget exposure at the same time.

The Cloud Logic of Intelligence

None of this began with generative AI. The commercial pattern was already familiar in the cloud era.

AWS still describes its pricing in utility terms, comparing pay-as-you-go billing to water or electricity: customers pay for what they use, stop paying when they stop consuming, and can scale up without long-term commitments. That language helped normalize a broader shift in how businesses bought computing power. Access became easier at the front end; spending grew more granular as use deepened; budgeting moved from fixed purchase to continuous measurement. The service stayed open. The bill became more sensitive to how fully a team built around it.

AI is beginning to follow the same path, only much closer to the center of daily work. The cloud rented storage, compute, and scale. Frontier AI is increasingly rented as drafting, synthesis, coding assistance, analysis, and operational reasoning. The charge no longer sits only behind the scenes, attached to infrastructure a user never sees directly. It now reaches into tasks that shape decisions, output, and pace. That shift helps explain why Gemini should be read as more than a product story. Its premium tiers, usage ceilings, and credit-based extensions do not sit alongside the model as minor commercial details. They define the terms under which high-value use is sustained.

Google’s own structure makes that clear. The company’s subscription pages separate AI Pro from AI Ultra not only on price, but on access. Ultra is marketed as offering “the highest level of access” to Google’s most capable models and features, while support materials describe AI credits as a shared mechanism across Flow, Whisk, and Antigravity. In practical terms, Gemini is no longer offered simply as a model with a monthly fee attached. It sits inside a layered commercial system: plans, quotas, credits, and differentiated access for users who need the service to remain dependable under heavier workloads.

That arrangement offers an obvious advantage to the provider. It does not require any retreat from the language of openness, scale, or innovation. The product can still look generous at the start. Short bursts of use can still feel abundant. Most users can still believe they have meaningful access. The financial pressure gathers later, after the model has been folded into recurring work and the cost of interruption starts to rise. At that point, the important distinction is no longer between access and exclusion. It is between access that remains casual and access that can carry work without interruption.

The middle of the market becomes strategically valuable in exactly this way. A platform does not need to break its standard tier to steer users upward. It only needs to let friction accumulate where work becomes difficult to pause. Teams rarely upgrade because a benchmark chart tells them to. They upgrade because delays pile up, retries waste time, planning becomes shakier, and the cheaper path no longer feels dependable enough for work tied to clients, publication, or delivery. The leverage sits there, at the edge of tolerable interruption.

The broader financial setting helps explain why this logic is becoming harder to ignore. Reuters reported in February that Alphabet expects 2026 capital expenditures of $175 billion to $185 billion, roughly double the $91.45 billion it spent in 2025, while a separate Reuters report described Big Tech's combined 2026 AI spending plans at roughly $600 billion. Those figures do not prove that any one limit or tier was introduced for a single reason. They do show the scale of the economic pressure now shaping how frontier AI can be sold, segmented, and extended.

That is the larger turn now underway. AI entered the market as a story about wider reach: more capability, less friction, broader access to powerful tools. As it settles into infrastructure, the governing logic looks more familiar. Broad invitation remains the public face. Metered reliance becomes the business underneath. The frontier is still open to visitors. Living there is getting more expensive.

The Cost of Staying Inside

The commercial terms become clearest only after the tool stops feeling optional.

At the beginning, the cost can still look manageable. A subscription is approved. A few people start using the model for drafting, research, document review, or coding help. Early gains are easy to name: faster first drafts, less manual sorting, fewer repetitive steps. Google's own plan structure keeps entry friction relatively low, with Pro and Ultra positioned as broader-access memberships layered on top of the Gemini app and related tools. The harder question arrives later, once a team starts building ordinary work around the system and the service is no longer being sampled but expected.

By then, the monthly fee no longer tells the full story. Google’s support pages describe AI credits as shared across Flow, Whisk, and Google Antigravity, and Google AI Pro members are told they can use credits to extend sessions beyond baseline quota in Antigravity. That matters because it formalizes a transition from simple access to managed continuation. The product is not priced only at the point of entry. It is priced again when work runs long, when agentic use expands, and when the workflow needs to keep going without interruption.

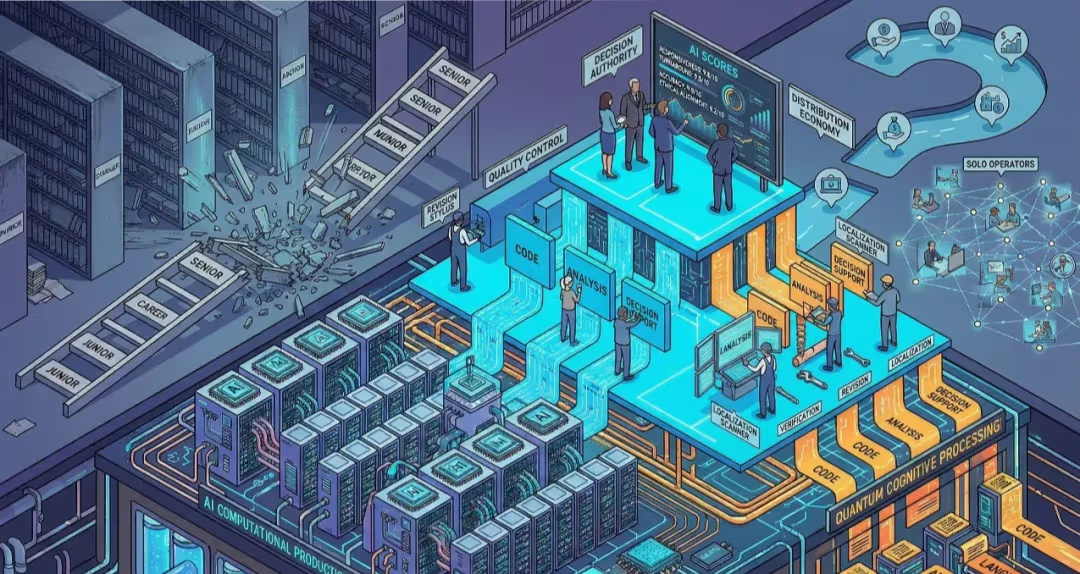

That structure changes the cost of stepping back. A team that has learned to move quickly with the model cannot easily scale down without losing speed, consistency, and output. Reporters who rely on the system to synthesize documents before writing, researchers who return to it to compare drafts and compress source material, product teams using it for repeated debugging and revision, consultants building it into memos and client-facing material, and editors moving between notes, transcripts, outlines, and finished copy all start to inherit a new kind of dependency. The model is no longer a shortcut sitting outside the workflow. It is part of the workflow. Once that happens, scaling back means rebuilding habits the system had begun to absorb. Google's own materials for Antigravity reinforce that role by framing it as a daily development environment spanning editor, terminal, and browser rather than a one-off assistant.

The burden does not fall evenly. A large company can absorb higher tiers, credit overages, and rising usage as a normal cost of staying current. A smaller team feels the same structure much sooner. A newsroom with a thin budget does not need to hit a hard wall to change its behavior. It only needs to become less certain that a long synthesis will finish cleanly, that an agent will stay within budget, or that another round of work will not trigger another layer of spend. That is when the adjustments begin: long tasks are shortened before they start, exploratory work is narrowed, and automation is used more cautiously. The formal openness of the platform remains intact. The practical room to rely on it starts to vary with budget.

Those adjustments accumulate quietly. A research team may stop assigning the model to the full archive and narrow the corpus to what can be processed more safely. A product group may avoid broader agentic passes through a codebase because the cost of exploratory iterations has become difficult to predict. A consultant may break a project into smaller deliverables instead of keeping the model involved across the entire assignment. An editor may keep the system for early summarizing but pull back from longer structural work once retries and repairs begin to erase the time saved at the start. None of these decisions looks dramatic in isolation. Together they change the scale of what gets attempted.

That is how an open system starts sorting users without visibly excluding them. Most people can still sign up, still use the tools, still get results. The dividing line appears in the quality and duration of use different users can sustain. Some can treat advanced AI as dependable infrastructure inside production. Others have to keep watching limits, credits, and the cost of continuation while deciding whether the work is still worth doing at full scale. Access remains broad. Dependability becomes tiered.

The broader economics make that shift easier to understand. AWS still explains cloud pricing through the language of utility billing, and Alphabet has told investors that 2026 capital expenditure could rise to between $175 billion and $185 billion, roughly double the prior year. Those facts do not prove a single motive behind every limit or tier. They do show the environment in which frontier AI is now being sold: one shaped by very large infrastructure commitments and strong incentives to meter high-value use more precisely once customers begin to depend on it.

The frontier is still open in the narrowest sense. People can enter, test the system, and see what it can do. The larger advantage now lies elsewhere: with the users who can stay inside long enough to build with confidence, work at full scale, and keep a line of inquiry intact without trimming it to fit quota, credits, or cost. At that point, pricing stops looking like a background business detail. It becomes part of the public meaning of the technology itself.

The Weekly Breeze

Independent reporting and analysis on Busan, Korea, and the broader regional economy.