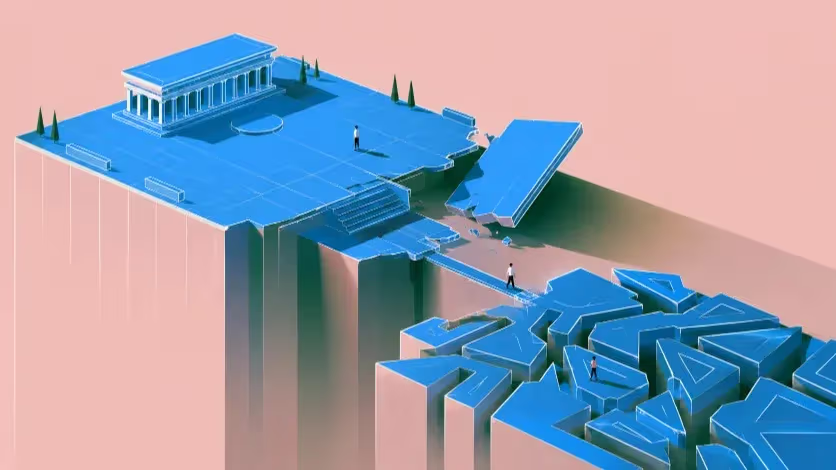

As artificial intelligence moves into classified military systems, the question cannot stop at whether humans remain in control. The deeper question is who is shaping these systems — and what war is teaching them.

AI Enters the Machinery of War

Artificial intelligence is no longer waiting at the edge of the battlefield. It is being integrated into the classified systems that help militaries analyze intelligence, plan operations and identify targets. In a May 1 release, the Pentagon said it had entered agreements with leading frontier AI companies, including SpaceX, OpenAI, Google, NVIDIA, Reflection, Microsoft, Amazon Web Services and Oracle, to deploy their capabilities on classified networks for lawful operational use. The department described the move as part of an effort to build an “AI-first” military, strengthen decision superiority and support warfighting, intelligence and enterprise operations.

The announcement was not simply another government technology contract. It marked a new stage in the relationship between frontier AI companies and the military state, because the same class of models that write code, summarize documents and answer questions for civilian users are now being adapted for environments where the stakes include surveillance, battlefield planning and the possible use of force. Reuters reported that the Pentagon is broadening the range of AI providers working across the military and integrating advanced AI capabilities into secret and top-secret network environments, while AP reported that the tools could help the military identify and strike targets more quickly and manage maintenance and supply lines.

The public language around these deals remains deliberately cautious. Technology companies speak of responsible use, lawful government purposes and human oversight, while military officials emphasize decision advantage, efficiency and national security. These phrases describe real constraints and real operational goals, but they also leave the central question unresolved. The question is no longer whether AI will reach the battlefield. It is what kind of military system AI will become part of, and what that system will teach it to value.

The Industry’s New Military Posture

For years, large technology companies tried to preserve at least some distance between commercial AI development and the most controversial instruments of military power. That distance was always incomplete: cloud infrastructure, cybersecurity, data analytics and defense contracting have long overlapped. But frontier AI changes the nature of the overlap. These models do not merely process data inside an existing system. They can help structure what users see, which questions they ask, what options appear plausible and how quickly decisions move.

The Pentagon’s new agreements show that the defense sector no longer treats AI as a peripheral tool. It wants frontier models inside classified networks, available to more users, integrated faster and supplied by multiple companies rather than a single provider. Reuters reported that newer AI entrants said the military had cut the time needed to bring vendors onto secret and top-secret data levels to under three months, down from 18 months or more. Reuters also reported that the Pentagon described the expansion as a way to avoid vendor lock-in.

Anthropic’s absence from the Reuters-reported list made the announcement more revealing, not less. Reuters reported that the company had been excluded after the Pentagon labeled it a supply-chain risk amid a dispute over guardrails for military use of its AI tools. AP reported that Anthropic had sought assurances that its technology would not be used in fully autonomous weapons or domestic surveillance, while Defense Secretary Pete Hegseth argued that the company must allow any uses the Pentagon deemed lawful.

The details of that dispute are still contested, but the broader point is already clear: military AI is no longer only a technical category. It has become a field of corporate judgment, state pressure and political conflict. If an AI company can refuse some military uses, then defense deployment is not merely a matter of customer demand. If a government can exclude or pressure a vendor over the terms of military AI use, then the boundary between commercial AI and state power is being negotiated through contracts, procurement rules and classified systems.

Why Human Control Comes Too Late

The standard ethical defense of military AI begins with a promise: humans will remain in control. That promise matters, because no serious framework for military AI can dismiss the need for meaningful human judgment over the use of force. Autonomous weapons and AI-assisted targeting raise direct concerns under international humanitarian law, as well as concerns about legal responsibility and human dignity.

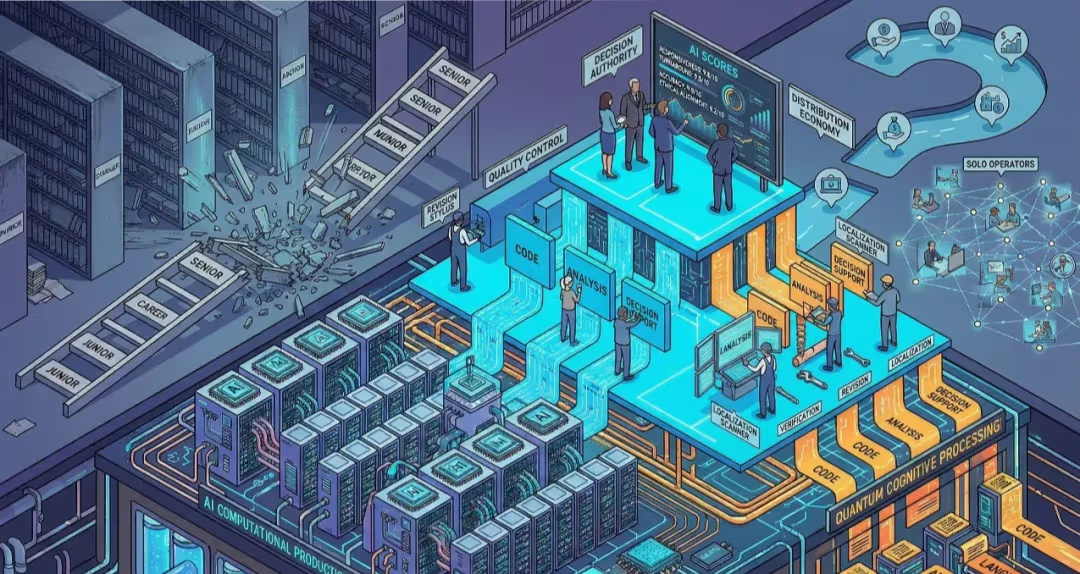

The problem is that human control often enters the debate too late. A human officer may remain formally present, but the AI system may already have decided what deserves attention, what appears urgent and what seems operationally reasonable. A model can summarize intelligence, rank possible threats, highlight patterns, generate operational options and attach confidence scores before a commander reaches the final approval stage. By then, the human is not judging from a neutral field. The human is judging inside a machine-shaped frame.

The International Committee of the Red Cross has warned that AI-assisted military decision-support systems are designed to help commanders process large amounts of battlefield information and make faster decisions, but that such systems also create ethical challenges when they shape military judgment, accelerate decision-making and affect accountability. The ICRC notes that these systems are often described as tools to support rather than replace human planners, yet they may still have subtle and powerful effects on military decisions.

That is why “human in the loop” can become a weak phrase when it is used without operational detail. It tells the public that a person remains somewhere in the chain, but not whether that person has enough time, evidence, authority and training to challenge the system. It does not reveal whether the interface makes uncertainty visible or hides it behind a single score. It does not show whether military workflows reward restraint or speed. A human can approve a decision without having meaningfully formed it; a human can remain accountable on paper while the system has already narrowed the field of imagination.

The ethical question, then, is not only who presses the final button. It is who built the conditions under which that button became easier to press.

AI Is Formed Before It Is Used

Military AI is often described as a question of use. The same model, companies argue, can serve many purposes: it can draft an email, summarize a maintenance report, analyze a logistics bottleneck or support a lawful government operation. On this view, the technology is general and responsibility lies primarily with the user.

That framing is too narrow, because AI systems are not only built by engineers; they are formed by the institutions that train, tune, purchase and deploy them. Data determines what they see, feedback determines what behavior is rewarded, contracts define acceptable uses, safety policies set boundaries, and deployment environments decide who can inspect the system, who can modify it and who must live with its consequences.

The military does not simply use AI after it is built. It helps define what the system is for. In civilian settings, success may mean productivity, convenience, customer satisfaction or faster information retrieval. In military settings, success means something else: decision advantage, threat detection, force protection, target identification, operational speed and command efficiency. These priorities are not accidental; they are the values of the institution.

That does not mean every military use of AI is ethically identical. A logistics system that routes medical supplies does not raise the same moral question as a tool that ranks people for attack. A maintenance model that predicts equipment failure does not belong in the same category as a system that processes intercepted communications for operational action. But precisely for that reason, the distinctions must be made explicitly rather than hidden under the broad and reassuring label of “military AI.”

The danger begins when broad language hides proximity to force. A system called “decision support” may sit far from coercive action, or it may sit directly beside targeting. A model described as an intelligence tool may help analysts understand information, or it may help nominate targets. A system called advisory may function as authoritative if officers are trained to trust it, pressured to act quickly or denied the time to examine alternatives.

This is where the parenting lens in AI ethics becomes useful, not because it asks us to pretend that AI models are children, but because it asks us to examine formation. Learning systems are fed, corrected, rewarded and released into the world by institutions with specific goals, and those institutions do not merely use AI after the fact. They help shape the conditions under which AI becomes useful.

The Parenting Lens Is Not a Metaphor About Children

Sky Croeser and Peter Eckersley’s 2019 paper, “Theories of Parenting and Their Application to Artificial Intelligence,” argues that AI ethics can benefit from radical and queer theories of parenting, especially as machine-learning systems gain more power over human lives and as more autonomous agents become imaginable. Their point is not that machines deserve childhood, but that the development, design, training and release of increasingly autonomous systems should be examined through the lens of formation, care and responsibility.

The word “mothering” can weaken this argument if it is used carelessly. It can sound sentimental, gendered or unserious, and it can suggest that AI systems are children, that women have a special ethical relation to machines, or that military AI can be softened by warmer language. None of those claims belongs at the center of this debate.

In the relevant theoretical context, mothering does not mean maternal instinct and does not give machines moral innocence. It refers to a tradition of care, formation and non-domination that asks how power shapes the future and how those who shape vulnerable systems, persons or environments answer for the consequences of that formation. Applied to AI, that idea gives developers, companies and governments fewer places to hide. If a learning system is shaped by data, incentives and deployment environments, then the actors who control those conditions are not passive suppliers; they are formative agents.

A large language model does not suffer when it is retrained, and a targeting-support system does not become ethically mature because it receives better feedback. The responsibility does not belong to the machine as if it were a child. The responsibility belongs to the institutions around it.

Care ethics gives this argument a sharper vocabulary. Joan Tronto’s model links care to attentiveness, responsibility, competence and responsiveness, treating care not as a feeling but as a practice of recognizing need, taking responsibility, acting competently and responding to the reaction of those affected.

For military AI, this framework is decisive because it moves the center of analysis away from the user and toward the person exposed to the system’s power. The user may be a commander, analyst or defense agency; the exposed person may be a civilian classified, tracked or targeted by a system they cannot inspect, challenge or refuse. That distinction is the difference between “responsible use” as a supplier’s phrase and responsibility as a political relation.

Classification Comes Before the Trigger

Military AI does not begin at the trigger; it begins with classification. Before a strike can be recommended, a person, location, signal or pattern must be made legible to a system: a behavior becomes relevant, a place becomes linked to risk, a body becomes part of a category. The machinery of force depends on this earlier act of sorting.

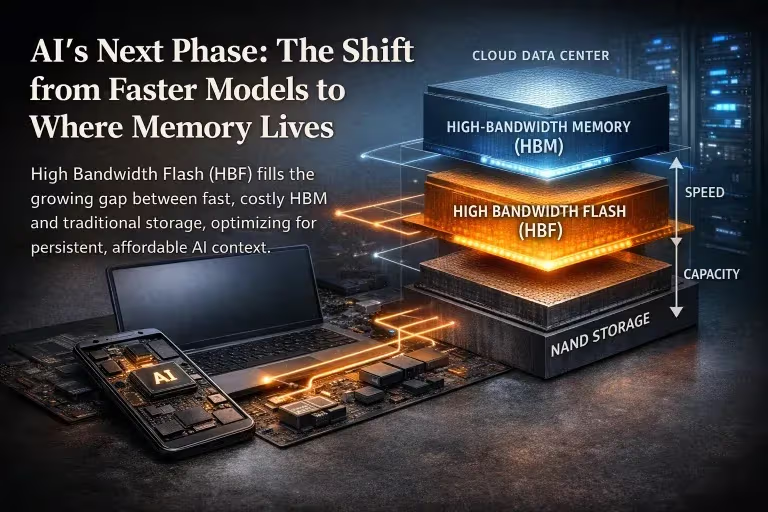

Armies have always used maps, informants, surveillance images, intelligence files and threat assessments, so AI does not invent military classification. It changes the scale, speed and apparent authority of classification by combining sensor feeds, communications data, imagery, movement patterns and social connections, then turning uncertainty into scores and scores into action priorities.

A score is not only information. It is an instruction about attention. When a system ranks potential threats, it tells human users where to look first; when it flags a pattern as suspicious, it narrows the field of interpretation; when it assigns confidence to a recommendation, it gives institutional form to doubt. These operations do not kill by themselves, but they prepare the ground on which killing can become easier to justify.

The danger lies in the conversion of human context into operational categories: a man becomes a movement pattern, a home becomes a node, a neighborhood becomes a risk area, a phone signal becomes a proxy for identity, and a social connection becomes evidence of proximity to danger. Each step may appear technical, but together they can move a person from the domain of civilian life into the domain of military suspicion.

This is why precision is an incomplete defense. Military AI is often justified through the promise of better intelligence and fewer mistakes, and those goals should not be dismissed, since better information can reduce indiscriminate violence. But precision does not answer the prior question of classification. A system can be precise within a category that should not have been constructed in the first place.

The ICRC has warned that AI-based decision-support systems are increasingly used to support target identification, selection and engagement, and that reliance on these technologies can risk exacerbating civilian suffering. The same analysis stresses that military AI use extends beyond autonomous weapons and that data, not robotics alone, is becoming a critical enabler of contemporary targeting systems.

Care ethics starts from the person before the category. It asks what is lost when a life is translated into indicators of risk, who has the power to define suspicious behavior, whether civilians become more vulnerable when their ordinary movements are absorbed into military datasets, and whether the people most exposed to error have any way to be seen as more than the system’s classification of them. That is why military AI cannot be judged only by whether it fires a weapon. A system may remain formally advisory and still reshape the moral field. It may not choose a target on its own, but it can help produce the target as an object of action.

Speed Is Not a Neutral Improvement

Military institutions do not adopt AI only because it can classify. They adopt it because it can compress time. A system that can process battlefield reports, satellite images, drone footage, intercepted signals and sensor data faster than human analysts appears to offer a decisive advantage: it can shorten the distance between detection and response, help commanders see patterns earlier and allow action before an adversary moves.

In war, speed looks responsible. A missed signal can kill soldiers, a slow response can allow an attack to unfold, and a commander who waits too long can lose the initiative. The Pentagon’s own language emphasizes decision superiority and faster operational use, while Reuters reported that the military sees AI tools as useful for planning, logistics, targeting and other operations that streamline large-scale military activity.

But speed is not a neutral improvement. It changes the conditions under which judgment takes place. Some forms of judgment require friction: a legal review takes time, a proportionality assessment takes time, a challenge from an analyst who distrusts the data takes time, and a commander’s hesitation before using force takes time. AI systems that reduce delay may also reduce the space in which doubt can do its work.

The ICRC has noted that AI decision-support systems are often presented as tools for faster and enhanced decision-making cycles, but that these claims must be assessed against the actual ethical effects of such systems on human autonomy, responsibility and judgment. Faster analysis does not automatically produce better judgment.

A human reviewer without time is not a safeguard, and a human reviewer without access to uncertainty is not a judge. The same problem appears in the language of efficiency. AI can make intelligence work faster, reduce cognitive burden and place scattered information into a single operational picture, and these functions are not inherently illegitimate. In some cases, they may help avoid mistakes. But efficiency becomes dangerous when the task involves deciding who can be watched, pursued or killed.

A faster hospital triage system and a faster targeting system do not raise the same ethical question, even if both classify urgency and allocate scarce attention. One is organized around preserving life; the other is organized around the possible use of force. The same technical virtue carries different moral weight depending on the institution that uses it.

War has always rewarded speed. AI gives that old reward a new technical infrastructure. The ethical question is whether societies will allow the pursuit of decision advantage to outrun the slower obligations of law, accountability and care.

Responsibility Becomes Harder to Locate

Military AI also multiplies the number of actors who can claim that responsibility lies somewhere else. A company can say it supplied a general-purpose model; a defense agency can say it used the model within lawful parameters; a commander can say the system only supported a human decision; an operator can say the output came from a validated tool; an engineer can say the deployment context was outside the training environment. Each statement may contain part of the truth. Together, they can produce a structure in which responsibility is everywhere and nowhere at once.

This is one of the most serious dangers of AI in military systems. The harm may be immediate, but accountability becomes distributed, delayed and classified. A civilian harmed by a machine-shaped decision cannot easily identify which part of the system failed: whether the data were outdated, the model was overconfident, the interface hid uncertainty, the commander misunderstood the output, the procurement contract rewarded speed over scrutiny, or the company knew its safeguards could be weakened in a classified environment.

The more complex the system becomes, the easier it is for each institution to describe itself as only one part of the chain. That logic is familiar in civilian AI. When a hiring algorithm rejects applicants, a company may blame the vendor, the vendor may blame the training data, the data provider may blame historical patterns and the user may blame the software. The person harmed by the decision meets a wall of partial explanations.

In military AI, the same structure operates under secrecy, urgency and force. A civilian classified as suspicious cannot request an explanation before a strike. A family harmed by an operation may not know whether an AI tool played any role. Journalists and humanitarian investigators may face classified records, national-security exemptions and systems that even their operators cannot fully explain.

This is why lawful use is too thin as a corporate defense. Lawfulness matters, but it does not exhaust responsibility. A company that supplies AI to military systems helps shape the conditions under which force is considered, accelerated and justified. If that company knows its model may support intelligence analysis, operational planning or targeting, it cannot treat downstream harm as merely a customer problem.

The same applies to governments: a defense agency cannot preserve accountability by placing a human officer at the end of a process structured by opaque systems. If commanders rely on AI-generated rankings, summaries or recommendations, the government must be able to explain how those outputs were produced, what uncertainty they carried and how human reviewers were trained to challenge them. Responsibility requires more than attribution after harm. It requires design before harm.

Care Is a Stricter Standard Than Safety

Care, in this context, is not a softer synonym for safety. It is a stricter standard. Safety often begins with the system: whether the model performs reliably, refuses prohibited outputs, stays within policy and behaves as expected under test conditions. These questions matter, but they keep the center of gravity inside the technology and treat harm as something that happens when a system malfunctions.

Care begins elsewhere. It begins with the person or community exposed to the system’s power, asking who becomes visible to the model, who becomes vulnerable to its errors, who can contest its outputs and who must live with the consequences after the operator logs off. That shift changes the governance standard for military AI.

Governments and companies should classify military AI functions publicly, rather than hiding administrative support, logistics, translation, intelligence analysis, targeting support and lethal decision support under one broad category. The ethical burden should rise as a system moves closer to coercion or force. High-risk systems should face a reversed burden of proof: the public should not have to prove that a classified AI deployment is dangerous after it has already entered service; the government and the company should have to prove before use that the system is necessary, limited, auditable and compatible with meaningful human judgment.

Meaningful human judgment also has to be defined in operational terms. A reviewer must have time, evidence, authority to refuse and training to challenge the system. Human oversight cannot mean a person briefly approves an output produced by a process they cannot inspect. Records must also be preserved. If an AI system contributes to military action, investigators should be able to determine what model version was used, what inputs shaped the output, how uncertainty was displayed, what the human reviewer saw and whether the recommendation was challenged or overridden. Classification may limit public disclosure, but it should not erase accountability.

A care-based standard would not replace meaningful human control. It would make the phrase harder to misuse. It would ask whether the system preserves doubt, whether it allows refusal, whether it leaves records, whether the affected can be recognized and whether responsibility remains attached to those who created the conditions for harm.

Some Uses Should Be Prohibited, Not Managed

Some uses should be prohibited, not optimized. AI systems should not make final lethal decisions, select human targets without meaningful human judgment, hide uncertainty behind unexplained rankings, convert civilian behavior into military suspicion through undisclosed proxies or allow safety safeguards to be quietly weakened in classified settings for uses connected to force.

These limits would not end military technology. They would force governments and companies to distinguish assistance from delegation. A model that routes supplies is not the same as a model that ranks targets, and a translation tool for non-sensitive documents is not the same as a system that summarizes intercepted communications for attack planning. A defensive cybersecurity tool is not the same as a targeting-support system.

The distinction must be institutional, not rhetorical. Companies should not describe a model as general-purpose when the contract, interface and deployment environment make its military function clear. Governments should not call a system advisory when operators experience its outputs as authoritative. Contractors should not invoke human oversight when the workflow gives the human neither time nor information to challenge the machine.

This is where public governance matters. Classified operations may require secrecy about specific sources, methods and missions, but secrecy should not erase the public’s right to know the categories of AI being deployed, the limits placed on those systems and the mechanisms for investigating harm. The public does not need every target list to know whether AI is being used to generate target lists. Lawmakers do not need to publish battlefield data to require audit trails, incident reporting and independent review.

A society that refuses lethal autonomy is not rejecting technological progress. It is preserving a moral boundary around the use of force. It is saying that some decisions must remain slow enough for responsibility, visible enough for accountability and human enough for judgment.

War Should Not Raise AI

The debate over military AI often begins too late: when a system is already inside a classified network, when a contract has already been signed, when a commander has already been promised decision advantage, when a company has already framed the deployment as responsible support. By then, the most important choices may already have been made.

The central question is not whether a human remains somewhere in the loop, but who designed the loop, who trained the system inside it, who defined success, who controlled the data, who set the pace and who will answer when the system helps make force easier to use.

This is why the parenting lens matters. It does not sentimentalize AI, ask governments to treat machines as children or invite companies to speak in the language of care while selling tools for force. It asks a harder question: what kind of intelligence emerges when learning systems are formed by institutions organized around secrecy, targeting and military advantage?

War teaches its own lessons. It rewards speed, classification, the reduction of ambiguity and systems that turn scattered signals into actionable options. These habits may be operationally useful. They are also morally dangerous when they become the environment in which AI is trained, trusted and deployed.

A society that allows war to raise AI should not be surprised when AI learns the grammar of force. The danger is not that machines will become human. The more immediate danger is that human institutions will train machines in their own most violent habits, then describe the result as innovation.

The question is not whether AI needs a mother. The question is whether societies will allow war to become one.


The Weekly Breeze

Independent reporting and analysis on Busan, Korea, and the broader regional economy.